The situation

A small but real wellness business was generating multi-million-dollar revenue through an iOS app and a PHP backend that the founder did not understand and could not run.

The entire backend codebase lived on a single contractor’s laptop and on the production server — never checked into source control, never shared. Backups were ad-hoc. There was no documentation, no test environment, and no automated tests. The iOS app was at least in version control, but the founder had no way to validate that the code being written was the code being shipped, or to rebuild the platform if the contractor walked away.

He had built a real business on a single person’s dev skills and goodwill. He stressed that he could lose it overnight — a hard drive failure, a dispute with the contractor, a routine outage with no failover — with no way to restore service to users in time.

Why they called us

The founder found Develomentor on a freelancing site. He was in the Raleigh-Durham area at the time, and so was Grant; meeting in person mattered for working through a stack he had never seen.

The deciding factors were availability, fair pricing, and credibility: Grant had co-founded a venture-backed startup and led engineering at scale, which signaled he could read both the code and the situation around it. The alternative — hiring a full-time CTO, or another contractor on the same model that created the problem — would have been slower, more expensive, and more of the same.

What we did

Four workstreams over roughly 30 hours of work, run as a part-time engagement with a mix of remote work and in-person sessions.

1. Source-control rescue and reproducibility

Recovered the backend PHP code from the outsourced developer — it had never been checked in — and got both the backend and the iOS Swift frontend into the same Bitbucket organization. Containerized the backend with Docker and wrote READMEs so the entire stack could be built and run from a clean machine. Walked the founder through it; he ran both apps end-to-end on his own laptop by the end of the session.

2. Code review and best-practices assessment

Reviewed the codebase end-to-end and surfaced the real risks: no fault tolerance (single VPS, no failover), ad-hoc database backups, weak version-control practices, no automated tests, a shared test/production database, no issue tracking, unencrypted traffic with openly downloadable premium audio, and weak usage analytics.

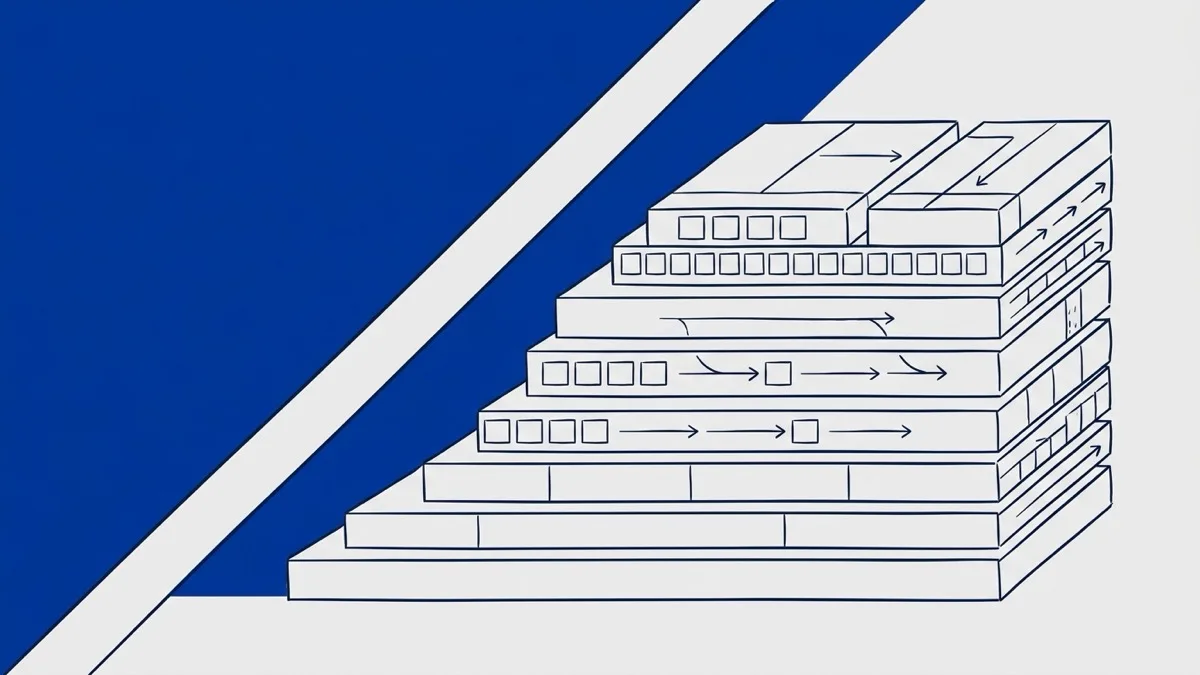

3. Load testing and performance baseline

Started with the system itself — walking the production access logs to estimate the concurrency the platform actually had to hold up under, rather than guessing from intuition. From there, built a repeatable load test against the four highest-traffic user flows (homescreen, free-episode list, playlist load, episode download) and stepped concurrency up in stages.
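As a rough illustration of the log-walking step, the sketch below counts requests per second in an access log and converts a peak rate into an estimate of in-flight requests using Little's Law. It is not the script used in the engagement. It assumes Common Log Format timestamps (the case study does not state the real server's log format) and that every entry carries the same UTC offset; the function names are hypothetical.

```python
import re
from collections import Counter
from datetime import datetime

# Assumption: Common Log Format timestamps, e.g. [10/Oct/2023:13:55:36 +0000].
# The UTC offset is matched but ignored, so all entries must share one offset.
TIMESTAMP_RE = re.compile(r"\[(\d{2}/\w{3}/\d{4}:\d{2}:\d{2}:\d{2}) [+-]\d{4}\]")


def peak_requests_per_second(log_lines):
    """Return the highest number of requests seen in any one-second bucket."""
    per_second = Counter()
    for line in log_lines:
        match = TIMESTAMP_RE.search(line)
        if match is None:
            continue  # skip lines that do not look like access-log entries
        stamp = datetime.strptime(match.group(1), "%d/%b/%Y:%H:%M:%S")
        per_second[stamp] += 1
    return max(per_second.values(), default=0)


def estimated_concurrency(requests_per_second, mean_response_seconds):
    """Little's Law: in-flight requests = arrival rate x time in the system."""
    return requests_per_second * mean_response_seconds
```

For example, a measured peak of 40 requests per second with a mean response time of 0.25 seconds suggests about 10 requests in flight at once, which gives the load test a grounded starting point.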

Two bottlenecks emerged. The playlist endpoint degraded fastest as concurrency climbed; reading the code traced the cause to several database joins and post-processing loops. Audio delivery degraded next: serving large MP3 files from the same server as the rest of the API put avoidable contention on the box.
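A staged load test of this kind can be sketched in a few lines. The harness below is a minimal sketch, not the tool used in the engagement: it runs a caller-supplied `request_fn` (a hypothetical stand-in for one user flow, such as a playlist load) at increasing concurrency and reports the 95th-percentile latency per stage. The stage sizes and request counts are illustrative defaults.

```python
import math
import time
from concurrent.futures import ThreadPoolExecutor


def timed_call(request_fn):
    """Run one request and return its wall-clock latency in seconds."""
    start = time.perf_counter()
    request_fn()
    return time.perf_counter() - start


def percentile(sorted_values, fraction):
    """Nearest-rank percentile of an already sorted, non-empty list."""
    index = max(0, math.ceil(fraction * len(sorted_values)) - 1)
    return sorted_values[index]


def run_staged_load(request_fn, stages=(1, 5, 10), requests_per_worker=5):
    """Return {concurrency: p95 latency in seconds} for each stage."""
    results = {}
    for concurrency in stages:
        total = concurrency * requests_per_worker
        # One thread per simulated user; each stage finishes before the next.
        with ThreadPoolExecutor(max_workers=concurrency) as pool:
            latencies = sorted(pool.map(timed_call, [request_fn] * total))
        results[concurrency] = percentile(latencies, 0.95)
    return results
```

Running the same harness once per user flow and comparing how quickly p95 latency grows from stage to stage is one way to see which flow degrades first. In practice `request_fn` would wrap an HTTP call against a non-production copy of the system.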

That fed a fix plan rather than a list of numbers. Playlist needed code work to optimize the database interactions. Audio could likely be solved without code at all, by putting the existing hosting provider’s CDN in front of the MP3 files. Each fix was scoped, ordered, and handed back as something the founder could fund or hand to a contractor.

4. Prioritized recommendations report

Delivered a written assessment with high- and medium-priority next steps the founder could action immediately or hand to a contractor — each costed in rough hours so the work could be scoped into a budget rather than left as a wishlist.

The result

A multi-million-dollar mobile revenue stream, previously dependent on a single undocumented deploy process with only ad-hoc backups, no documentation, and no tested recovery path, was put on durable footing in roughly 30 hours.

- Backend recovered from the contractor and checked into Bitbucket alongside the iOS code; the founder personally demonstrated running both apps end-to-end on his own machine.

- First performance baseline the company ever had — repeatable load test against the four highest-traffic user flows, run well above measured peak production load to surface where the system would break first as the business grew, with a costed fix plan for each bottleneck.

- Five highest-risk gaps and four secondary issues identified, prioritized, and costed.

- Docker-based local environment so any future engineer can stand up the full stack in minutes, eliminating the contractor lock-in that was the original existential risk.

- Contractor-management playbook for future engagements: require build docs, require a live demo of any deliverable, and never accept code that does not run on the founder’s own machine.

The founder went from zero visibility into his own platform to being able to demonstrate, deploy, and reason about it without the original contractor.

What this means for you

If you are running real revenue on a product that an outsourced developer or a no-code or AI tool built for you — and you have realized that you do not actually know how it works, where it lives, who has the keys, or what would happen if the person you are paying disappeared tomorrow — this case is for you.

It is fixable in days, not months. You do not need to hire a full-time CTO to fix it. You need a senior, independent voice who can read your stack, name the real risks, and get you back in control of your own platform.

Most engagements like this start as a fixed-scope review with a written deliverable, and convert into ongoing fractional CTO work once the immediate risk is contained. See the engagement structures on Founders & Small Teams for what that looks like in practice.

Tell us where you are. Book a Discovery Call.